RUSSIAN JOURNAL OF EARTH SCIENCES, VOL. 19, ES6003, doi:10.2205/2019ES000695, 2019

S. V. Baranov1, A. D. Gvishiani2,3, C. Narteau4, P. N. Shebalin5

1Kola Branch, Federal Research Center Geophysical Survey, Russian Academy of Sciences, Apatity, Russia

2Geophysical Center of Russian Academy of Sciences, Moscow, Russia

3Institute of the Physics of the Earth, Russian Academy of Sciences, Moscow, Russia

4Université de Paris, Institut de physique du globe de Paris, CNRS, F-75005 Paris, France.

5Institute of Earthquake Prediction Theory and Mathematical Geophysics, Moscow, Russia

Studying the hierarchical structure of the aftershock sequence of the three largest earthquakes of the last decade, we show that the number of offspring events counted in a fixed magnitude band with respect to the magnitude of the parent events follows an exponential distribution. Such an exponential productivity law is coherent with the exponential decays inferred from largest earthquakes worldwide. Epidemic Type Aftershock Sequences (ETAS) are the most popular stochastic models of seismicity and they are all based on a Poisson distribution of the earthquake productivity with a pronounced non-zero mode. We construct here an alternative model incorporating an exponential productivity law. For the three aftershock sequences. we estimate parameters of both models using aftershocks occuring during the first 2 days. We simulate a set of synthetic earthquake catalogues for both models and compare the average cumulated number of events with respect to time. In all cases, the ETAS model overestimates the number of events in the interval from 2 to 365 days. For the same time period, the exponential ETAS model gives a satisfactory cumulative number of events. We conclude that exponential distribution of the earthquake productivity seems to be an important property of the seismic relaxation process.

An important feature of seismicity is the occurrence of space-time clusters demonstrating that earthquakes interact with each other. Focusing on the way in which a sequence of earthquakes develops over space and time, we may consider every event as the trigger for the perturbation of the state of stress in some area around the event. The zones of such a perturbation intersect in time and space, [Gvishiani et al., 2013a; 2013b; 2016], and each new event may be considered as an "offspring" of all preceding earthquakes. For a Poisson process such epidemic behavior may be described as a superposition of the processes, initiated by every event. Since the advent of epidemic models of seismicity, the productivity has become a major issue because it determines the increase in seismicity rate after each earthquake [Helmstetter, 2002; Kagan, 1981; Ogata, 1989]. In these models the number of events triggered by a magnitude $m$ earthquake is considered to vary as a Poisson process of rate $\langle N(m) \rangle=K10^{\alpha m}$. The value $\alpha$ was estimated in a range from 0.5 to 2 [Console et al., 2003; Hainzl and Marsan, 2008; Hainzl et al., 2013; Wang et al., 2010; Werner and Sornette, 2008; Zhuang et al., 2004; Zhuang et al., 2005]. This value is usually close to the observed $b$-value, the slope of the earthquake-size distribution [Helmstetter, 2003].

However, these estimates remain uncertain due to the difficulty of isolating the relative contributions of successive events in a sequence. This task remains in early stage despite the diversity of declustering methods implemented in the past. Two stochastic approaches have been suggested to find causal links within cascades of triggered seismicity. A first approach is to separate the branching structure of earthquake sequences from the background rate using an iterative algorithm based on maximum likelihood estimation of the parameters of an epidemic model of seismicity ETAS [Zhuang et al. 2002]. A second approach is model independent.

A linear contributions of each earthquake to the overall seismicity rate is assumed [Marsan and Helmstetter. 2008]. Those two approaches suppose that every new earthquakes is an "aftershock" of all preceding events, and the goal is to estimate the impact of each preceding event on each subsequent event in terms of probability. An alternative approach [Zaliapin and Ben-Zion, 2013; Zaliapin et al., 2008] goes directly to consider a tree of events in which each event may be a "parent" of several later events, but it can be an "offspring" of only one earlier event. Technically the "parent" is found as a "nearest neighbor" using proximity functions in time-space-magnitude domains [Baiesi and Paczuski, 2004; Zaliapin et al., 2008]. Here we shall call "parent" events triggering events, and their "offsprings" triggered events. All these methods confirm the dependency of the productivity on the magnitude of the triggering event. However, it was found that within this dependency there is a huge variability in the number of triggering events in the seismic catalogs [Marsan and Helmstetter, 2017]. Recently it was found that unexpectedly this variability may be described by exponential distribution [Shebalin et al. 2018] with maximum at 0. This result was obtained for the global statistics of the number of aftershocks from earthquakes of magnitude 6.5 and above. Aftershocks of magnitude above a threshold, defined as the magnitude of the main shock minus 2, were counted. Here we study a distribution of the productivity in a tree of aftershocks for three largest earthquakes of the last decade: 11 March 2011, Mw=9.1, Tohoku earthquake; 27 February 2010, Mw=8.8, Chile earthquake; 11 April 2012, Mw=8.6, Sumatra earthquake. We find that for each sequence the distribution of the productivity also tends to an exponential form. Thus, exponential distribution of earthquake productivity seems to be a general property of seismicity. Exponential distribution of the real number of triggered events contradicts to usually expected form of Poisson distribution. To better understand this issue we compare two models for the three aftershock sequences.

We define earthquake productivity using "delta-analysis" [Zaliapin and Ben-Zion, 2013; Zaliapin et al., 2008], in a magnitude band of a given width $\Delta M$ relative to the magnitude of each triggering event. Thus, the productivity is a property of each earthquake.

To count the productivity values we decompose the earthquake catalogue into a hierarchical tree of pairwise links triggering-triggered events. For each pair of earthquakes $\{i,\,j\}$, we compute the proximity function[Baiesi and Paczuski, 2004],

|

$P_{r - j} = t_{ij}(r_{ij})^{d_{\rm f}}10^{-bm_i}\quad \quad$ for $ \quad t_{ij}>0 \quad $ and $\quad P_{r - j} = + \infty \quad \quad \quad \quad $ for $ \quad t_{ij}\le 0 $ | (1) |

where $t_{ij}=t_j-t_i$ is the inter-event time, $r_{ij}$ the spatial distance between the epicenters, $m_i$ the magnitude of event $i$, $d_{\rm f}$ the fractal dimension of the epicenter distribution and $b$ the slope of the earthquake-size distribution. Using the proximity function (1) for each event we find the preceding nearest-neighbor. In case the $\eta$ value exceeds a threshold $\eta_0$, the event is considered as a background event because it has no triggering event. For each sequence we computed $\eta_0$ using the original technique of [Zaliapin and Ben-Zion, 2013; Zaliapin et al., 2008], approximating the distribution of the nearest-neighbor values $log(\eta)$ by a mixture model of two Gaussian distributions, one modeling independent events, the other causally-related events. We select the $\eta_0$-value for which the two types of errors compensate each other: same probability of having causally-related events with $\eta>\eta_0$ and independent events with $\eta<\eta_0$. Accordingly, all clusters are built from a primary triggering event. There is a single path from any earthquake in a cluster to the corresponding primary event. Primary events by definition are "background events". Background events with the largest magnitude in the cluster are mainshocks. But a triggered event may have a larger magnitude than its triggering events. In this case the main shock is not a primary event and nor background event. Those definitions describe an aftershock sequence as a hierarchical tree of events. This is slightly different from a standard definition of the foreshocks – main shock – aftershock series in which the majority of the aftershocks (and also foreshocks) are linked directly to the main shock. In order to avoid the excessive influence of the definition of the proximity function on our analysis, for each of the three series we consider not a single hierarchical tree of events, including the mentioned earthquakes, but all earthquakes in a simple spatial region with a form of stadium [Baranov and Shebalin, 2017] in a time interval of 365 days after the earthquake. For each triggering event, we count the number $N$ of triggered events at the lower hierarchical level using a relative magnitude threshold $\Delta M$ (i.e. $M_{\rm triggering}-M_{\rm triggered}<\Delta M$). This number $N$ is defined as the productivity.

|

| Figure 1 |

The distribution of the number of triggered events for an earthquake population is defined as the productivity distribution with a mean denoted $\Lambda_0(\Delta M)$, we call the clustering factor. The productivity may vary from place to place, and also from sequence to sequence. Recently it was found [Shebalin et al. 2018] that in a global scale the productivity has a distribution of the exponential shape with maximum at 0. It was also shown that this shape does not depend on the magnitude of the triggering earthquakes nor on the width of the considered magnitude band. For the exponential distribution the clustering factor is an important parameter, as it is a single parameter of this distribution. Here we concentrate on the productivity distribution within aftershock sequences of large earthquakes. It is important to know whether the exponential form is also characteristic in much more homogeneous conditions in comparison to the global variability of the aftershock sequences considered earlier [Shebalin et al. 2018]. The estimated completeness magnitude for the Sumatra and Chile sequences is 4.5, for Tohoku 5.0. For the analysis we use the value $\Delta M=1.5$. This choice allowed to choose the minimum magnitude of triggering earthquakes $M_tr$ 6.0 for the Sumatra and Chile sequences and 6.5 for the Tohoku sequence. Figure 1 shows the histograms of the productivity in comparison to exponential and Poisson distribution. We note that exponential distribution is the distribution of a real value, and the actual productivity is an integer. We may interpret this challenge by supposing that the productivity is an internal property of each earthquake, similar to its magnitude. Specific realizations of the productivity is an integer. It is natural to suppose that the specific values have Poisson distribution with a rate equal to the "internal" productivity. The existing epidemic models of seismicity imply that the internal productivity is constant. The difference between the "internal" and the real productivity may explain some distortion of the exponential distribution. However it is clear from the figure that for all the three sequences exponential distribution is preferable in comparison to Poisson distribution.

We test here whether the found property is important for modeling the seismicity. We compare two epidemic models, the classic ETAS model and a similar model in which the constant internal productivity is replaced by a random internal productivity with exponential distribution. Using an interval of 2 days right after the large earthquakes. Using those estimates we construct two versions of a synthetic catalog. Repeating simulations many times, we calculate an average cumulative number of events as a function of time in the interval 0 to 365 days. Finally we compare the results for the two models with the real data.

The classic ETAS model [Kagan and Knopoff, 1981; Ogata, 1989] considers seismicity as a non-stationary Poisson process with a rate described by the equation (2).

\begin{equation} \tag*{(2)} \lambda_{ETAS}(t) = \mu + K_0 \sum_{t_i < t} \frac{10^{\alpha(M_i - M_0)}}{(t - t_i + c)^p}, \end{equation}where $t_i$ and $M_i$ are the time and the magnitude of the $i$-th earthquake, $\mu$, $K_0$, $\alpha$, $с$ and $p$ are parameters, $M_0$ magnitude threshold for counting events. Parameters $с$ and $p$ describe temporal decay of the triggered events according to the empirical Omori-Utsu law [Utsu, 1965; Utsu, 1970]. Parameter $\alpha$, as discussed above, is usually close to the $b$-value of the Gutenberg-Richter relation. Parameter $\mu$ is the background seismicity rate, which is assumed constant. To estimate parameters of the model we use a standard maximum likelihood procedure [Ogata, 1989]. Earthquake catalogs are not complete right after large earthquakes. To minimize the impact of this effect we omit in the analysis the interval upto 0.05 days after the earthquake.

For the synthetic catalog simulations there are two equivalent ways [Zhuang et al., 2004]. The first is to treat the ETAS model as a single point process, with the probability of an event at each point in time reflected in the conditional intensity which contains a component of background and a component of triggered seismicity contributed by all past events in the history of the process. The second way is to simulate the background events as a Poisson process, and then recursively simulate the aftershocks resulting from each of these background events in turn. Finally, all events are combined and put into the correct temporal order of occurrence. The first way is not appropriate for our modification of the ETAS model, because the internal productivity is random. For this reason we apply here the second way. In both methods the magnitude of events is simulated independently as a random number above $M_0$ with distribution defined by Gutenberg-Richter relation.

We suggest here a simple modification of the ETAS model we shall call EP model. This model modifies the recursive definition of the ETAS model described above. The model represents seismicity as a sum of Poisson processes with the decay according to the Omori law, initiated by background events. Each new event also initiates a similar decaying process. For the synthetic catalogue simulations for each new event we randomly generate its internal productivity $\Lambda_i$ according to the exponential distribution

\begin{eqnarray*} p(\Lambda_i)=\frac{1}{\Lambda_0}e^{\lambda_i/\Lambda_0}. \end{eqnarray*}Then we simulate each branch of the non-stationary process with the rate defined by the equation (3):

\begin{equation} \tag*{(3)} \lambda_{EPi}(t) = \frac{\Lambda_i}{(1+(t-t_i)/c)^p}. \end{equation}In simulations we use Bayesian estimates of the parameters $c$ and $p$ with Gaussian priors [Shebalin and Baranov, 2019] in the interval (0.05,2) days after the large earthquakes. The clustering factor $\Lambda_0$ is also estimated in this interval simply as the average productivity. This number is corrected to the interval of 365 days using a multiplier

\begin{eqnarray*} U=\frac{U(0.05,365)}{U(0.05,2)}, \end{eqnarray*}where $U(a,b)=\int_a^b{(1+x/c)^{-p}dx}$. Like in ETAS model, magnitudes of events are independently assigned according to the Gutenberg-Richter relation. We use a Bayesian estimate of the $b$-value with Gaussian priors [Shebalin and Baranov, 2019].

We used data from ANSS ComCat earthquake catalog provided by USGS. Aftershocks of M8.8 Chile earthquake of 2010 were taken for a year after the mainshock from the circle of radius 900 km surrounding its epicenter. Aftershocks of M9.1 Tohoku earthquake of 2011 were taken for a year after the mainshock from the circle of radius 1000 km surrounding its epicenter. Aftershocks of M8.6 Sumatra were taken for a year after the mainshock from the circle of radius 700 km surrounding its epicenter. We did not apply any other special aftershock selection procedure.

|

| Figure 2 |

|

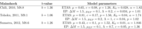

| Table 1 |

For each of the three sequences we have performed 2500 simulations of the synthetic catalogs using the two models. We estimated ETAS and EP models parameters using data for 2 days after the mainshocks (Table 1). The average cumulative number of event has been calculated as a function of time. Results are shown in Figure Figure 2.

We see that the ETAS model gives a drastic overestimation of the earthquake rates at later times, while the EP model is quite acceptable in the whole forecasting period from 2 to 365 days. Two major reasons explain this as we can suppose. First, values following the exponential distribution are statistically smaller than values of the Poisson distribution with the same mean value. Thus, the EP model should predict smaller rates in comparison to the ETAS model. Second, in all three cases the background rate of the ETAS model estimated at the beginning of the sequence is much higher than estimated in the interval of 365 days. In the EP model the calculations of the background rate give similar values for the beginning and for the whole sequence. One of interesting results of this paper is exponential form of the productivity distribution within single aftershock sequences from large earthquakes. Earlier this property was established in global and regional scales [Shebalin et al. 2018; Shebalin and Baranov, 2019]. The obtained results demonstrate advantages of the suggested modification of the ETAS model. It is too early to come to final conclusions about those two model, however we obviously may conclude that exponential shape of the productivity distribution is an important property of the seismic process.

Baiesi, M., M. Paczuski (2004) , Scale-free networks of earthquakes and aftershocks, Phys. Rev. E, 69, p. 066106, https://doi.org/10.1103/PhysRevE.69.066106.

Baranov, S. V., V. A. Pavlenko, P. N. Shebalin (2019) , Forecasting Aftershock Activity: 4. Estimating the Maximum Magnitude of Future Aftershocks, Izv., Phys. Solid Earth, 55, p. 548–562, https://doi.org/10.1134/S1069351319040013.

Baranov, S. V., P. N. Shebalin (2017) , Forecasting aftershock activity: 2. Estimating the area prone to strong aftershocks, Izv., Phys. Solid Earth, 53, p. 366–384, https://doi.org/10.1134/S1069351317020021.

Console, R., M. Murru, A. M. Lombardi (2003) , Refining earthquake clustering models, J. Geophys. Res.: Solid Earth, 108, https://doi.org/10.1029/2002JB002130.

Gvishiani, A., M. Dobrovolsky, S. Agayan, et al. (2013a) , Fuzzy-based clustering of epicenters and strong earthquake-prone areas, Environmental Engineering and Management Journal, 12, no. 1, p. 1–10, https://doi.org/10.30638/eemj.2013.001.

Gvishiani, A., B. Dzeboev, S. Agayan (2013b) , A new approach to recognition of the earthquake-prone areas in the Caucasus, Izvestiya, Physics of the Solid Earth, 49, no. 6, p. 747–766, https://doi.org/10.1134/S1069351313060049.

Gvishiani, A., B. Dzeboev, S. Agayan (2016) , FCAZm intelligent recognition system for locating areas prone to strong earthquakes in the Andean and Caucasian mountain belts, Izvestiya. Physics of the Solid Earth, 52, no. 4, p. 461–491, https://doi.org/10.1134/S1069351316040017.

Hainzl, S., D. Marsan (2008) , Dependence of the omori-utsu law parameters on main shock magnitude: Observations and modeling, J. Geophys. Res.: Solid Earth, 113, https://doi.org/10.1029/2007JB005492.

Hainzl, S., O. Zakharova, D. Marsan (2013) , Impact of aseismic transients on the estimation of aftershock productivity parameters, Bull. Seismol. Soc. Am., 103, p. 1723–1732, https://doi.org/10.1785/0120120247.

Helmstetter, A. (2003) , Is earthquake triggering driven by small earthquakes?, Phys. Rev. Lett., 91, p. 058501, https://doi.org/10.1103/PhysRevLett.91.058501.

Helmstetter, A., D. Sornette (2002) , Subcritical and supercritical regimes in epidemic models of earthquake aftershocks, J. Geophys. Res.: Solid Earth, 107, p. 2237, https://doi.org/10.1029/2001JB001580.

Holschneider, M., G. Zöller, S. Hainzl (2011) , Estimation of the maximum possible magnitude in the framework of the doubly-truncated gutenberg-richter model, Bull. Seimol. Soc. Am., 112, p. 1649–1659, https://doi.org/10.1785/0120100289.

Kagan, Y. Y., L. Knopoff (1981) , Stochastic synthesis of earthquake catalogs, J. Geophys. Res.: Solid Earth, 86, p. 2853–2862, https://doi.org/10.1029/JB086iB04p02853.

Marsan, D., A. Helmstetter (2017) , Single-link cluster analysis as a method to evaluate spatial and temporal properties of earthquake catalogues, J. Geophys. Res.: Solid Earth, 122, p. 5544–5560, https://doi.org/10.1002/2016JB013807.

Marsan, D., O. Lenglin{é} (2008) , Extending earthquakes{\textquoteright} reach through cascading, Science, 319, p. 1076–1079, https://doi.org/10.1126/science.1148783.

Ogata, Y. (1989) , Statistical model for standard seismicity and detection of anomalies by residual analysis, Tectonophysics, 169, p. 159–174, https://doi.org/10.1016/0040-1951(89)90191-1.

Shebalin, P. N., S. V. Baranov (2019) , Forecasting Aftershock Activity: 5. Estimating the Duration of a Hazardous Period, Izv., Phys. Solid Earth, 55, p. 719–732, https://doi.org/10.1134/S1069351319050112.

Shebalin, P. N., S. V. Baranov, B. A. Dzeboev (2018) , The Law of the Repeatability of the Number of Aftershocks, Dokl. Earth Sc., 481, p. 963–966, https://doi.org/10.1134/S1028334X18070280.

Utsu, T. (1965) , A method for determining the value of b in a formula {$log n = a - bM$} showing the magnitude-frequency relation for earthquakes (with English summary), Geophys Bull. Hokkaido Univ., 13, p. 99–103.

Utsu, T. (1970) , Aftershocks and earthquake statistics (ii): Further investigation of aftershocks and other earthquake sequences based on a new classification of earthquake sequences, J. Faculty Sci., Hokkaido University, Ser. VII (Geophys.), 3, p. 197–266.

Wang, Q., F. P. Schoenberg, D. D. Jackson (2010) , Standard errors of parameter estimates in the etas model, Bull. Seismol. Soc. Am., 100, p. 1989–2001, https://doi.org/10.1785/0120100001.

Werner, M. J., D. Sornette (2008) , Magnitude uncertainties impact seismic rate estimates, forecasts, and predictability experiments, J. Geophys. Res.: Solid Earth, 113, https://doi.org/10.1029/2007JB005427.

Zaliapin, I., Y. Ben-Zion (2013) , Earthquake clusters in southern california i: Identification and stability, J. Geophys. Res.: Solid Earth, 118, p. 2847–2864, https://doi.org/10.1002/jgrb.50179.

Zaliapin, I., A. Gabrielov, V. Keilis-Borok, H. Wong (2008) , Clustering analysis of seismicity and aftershock identification, Phys. Rev. Lett., 101, p. 018501, https://doi.org/10.1103/PhysRevLett.101.018501.

Zhuang, J., C.-P. Chang, Y. Ogata, et al. (2005) , A study on the background and clustering seismicity in the taiwan region by using point process models, J. Geophys. Res.: Solid Earth, 110, https://doi.org/10.1029/2004JB003157.

Zhuang, J., Y. Ogata, D. Vere-Jones (2002) , Stochastic declustering of space-time earthquake occurrences, J. Am. Stat. Ass., 97, p. 369–380, https://doi.org/10.1198/016214502760046925.

Zhuang, J., Y. Ogata, D. Vere-Jones (2004) , Analyzing earthquake clustering features by using stochastic reconstruction, J. Geophys. Res.: Solid Earth, 109, https://doi.org/10.1029/2003JB002879.

Received 20 September 2019; accepted 22 November 2019; published 30 November 2019.

Citation: Baranov S. V., A. D. Gvishiani, C. Narteau, P. N. Shebalin (2019), Epidemic type aftershock sequence exponential productivity, Russ. J. Earth Sci., 19, ES6003, doi:10.2205/2019ES000695.

Copyright 2019 by the Geophysical Center RAS.